Prasanth Reddy

Welcome to this episode of WaveLength, where we discuss trends, opinions, and goings-on in the space where enterprise software developers lurk. WaveMaker combines open standards, component architecture, and low code technologies to offer enterprise software developers a high-productivity, high-speed platform for custom software implementations. With AI added to the mix, there are many opinions on how it can sharpen our focus even more on improving the iterative developer experience in custom software implementations. We spoke to Prasanth Reddy, senior director of product management, on what he thinks WaveMaker should do next.

WaveMaker: We have always been in the business of reducing the grunt work involved in writing software applications. Isn’t all this AI buzz more of the same?

Prasanth Reddy: Reducing grunt work is an outcome. AI is really about increasing the level of abstraction. If you consider abstraction a ladder, WaveMaker’s existing widget library is the first rung. What we are doing with AI is moving up the ladder of abstraction. This is a way we hope to get closer to the intent in the user’s mind and convert that into working WaveMaker app code.

So what I’m telling users is: don’t think about tables and lists anymore. Think about what you want to build. You want a leave management app with the usual approvals, privileges, the leaves you can take, and so on, with some analytics thrown in. Where do you start if not from some boxes and arrows on the whiteboard?

WM: We can take the abstraction higher than just canned interaction components and workflows, is what you are saying.

PR: Yes. So, AI has taken the abstraction level further up. And that is where we are now with using it in low code. We leverage LLMs (large language models) to convert that intent into working code. It also means we find the right LLMs to use, right?

Our philosophy has always been moving the abstraction level up so people (developers) become more productive. With AI, we are moving even further into layers where users directly interface using their spoken language with a generic platform. We are not thinking of vertical solutions for finance or healthcare – many products do that – but of providing a way for users to build their own abstractions in prefabs (called packaged business components by some analysts).

WM: Products like Unqork and Temenos are domain-specific and are also abstracting. How is our approach different from a product with a domain model built in?

PR: Yeah, our approach has always been that we will be a generic product that enhances the productivity of ANY developer. It allows them to create their own abstractions. In contrast, a vertical product leans into a particular area and has entirely converted its product to address only those features that are relevant in, say, the finance industry. Even the way the product is marketed and sold, all of that adds up to that vision. Our vision is more on increasing generic developer productivity.

WM: Having a domain model may clarify how AI can be used. WaveMaker aims to simplify life for professional developers, for whom higher-level abstractions are essentially use-case scenarios or specifications. But these are a wide variety and non-specific. How does this pan out for WaveMaker?

PR: Our strength has always been our user base of professional developers. We understand them well. We belong to the same tribe. We are now trying to swim upstream to expand our total addressable market by getting business analysts and designers onto the platform. After all, innovation does not happen in isolation, it happens at the point of interaction. The more meaningful we make that interaction, the higher the chances of closing the intent-to-code gap.

WM: Ah, this is really about team, not individual, productivity.

PR: I must state this here: this is not just about the productivity of individual developers but whole teams. Whether they are Figma users designing wireframes or business analysts writing specifications, the iteration with core developers is still very long and frustrating and riddled with losses in translation.

This is because of how abstractions have been set up in WaveMaker at the widget level. With AI, we are breaking through to a higher level of abstraction, opening our product out to more people but at the same time keeping the interaction with core developers meaningful. That’s when we feel we enable whole teams to become productive, not just individual developers. We expect this to result in unprecedented iteration velocity.

For the iteration cycle between teams – whether designers and developers or business analysts and developers – the time each iteration takes to complete determines the time to market.

WM: Why AI? After all, the low code mantra has always been about abstraction, acceleration, and productivity.

PR: We couldn’t have done it at a generic level without AI. We would have had to pivot and choose a few domains and just completely lean into it. But now, we think we can achieve higher-level abstractions and continue taking a more horizontal approach – precisely what AI enables.

WM: You can go wide and deep at the same time.

PR: Yes, absolutely. And we are uniquely positioned because we already have a product that allows customers to develop their own abstractions. And now we have an AI play with ChatGPT (and similar) that is voraciously feeding on all kinds of data. We’re perfectly placed to put these two together and create a generic product that at once addresses a large amount of surface area. So that’s what I meant when I said “converting intent to code.”

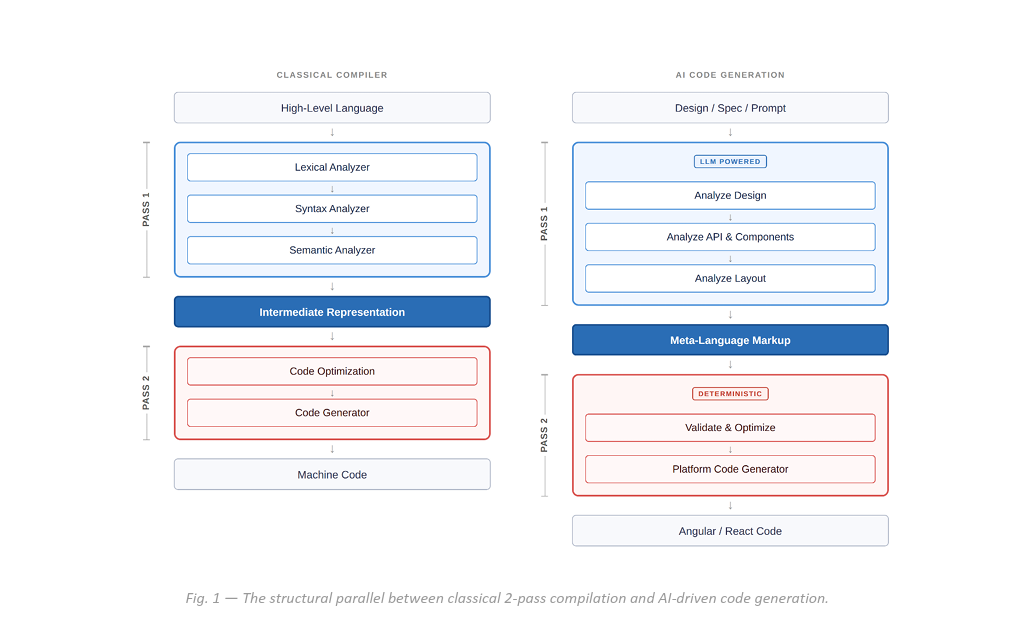

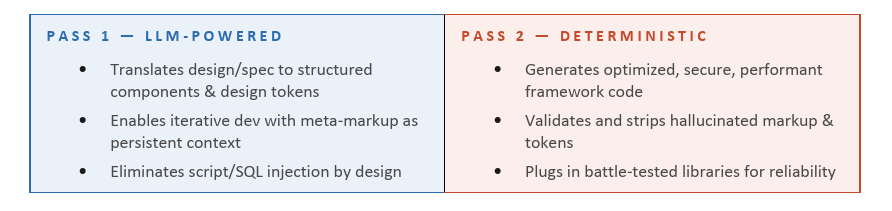

WM: Will we implement this as a one-pass activity that gives users a head start? Or is it going to be with them through the lifecycle, that is, actually complete round trips?

PR: Well, even if you take a single iteration, AI involvement is getting more done because there is less lost in translation. This is particularly impactful when each feature is in a different sprint. We have seen this when working with ISV product teams on low code. To me, with every iteration, more gets done, there is less frustration, less chasing of bugs, and higher chances of being pixel-perfect.

Today, when you look at iterations that involve hand-offs among business analysts, designers, and front-end developers, there is a loss in the quality of code output. This generates a long list of bugs, which generates grunt work, which burdens the team and affects their code quality. We have to break this cycle.

The reality is that nobody wants to manage a list of issues or prioritise, because that is the real grunt work. Everyone is talking about grunt work in coding, but the work that people actually hate is prioritising, maintaining lists, allocating work, deciding who gets to do what, etc. If we reduce this, we will achieve a lot. We reduce management overheads and focus on real work.

WM: Our first step is to build a WaveMaker component plugin for Figma users. Will the approach hold when we open up to all of Figma? Will it be too wild to guarantee 99% accurate app code?

PR: The two worlds of designers and developers are different. The semantics are different. The developer side of the equation is more deterministic and syntax-driven, while the designer side – yes, designers follow a process, but it is largely heuristic and imaginative. There is variety. But you really don’t need to be 99% accurate in the translation because our strength is our low code editor, where code can be fine-tuned or corrected easily. Even if I give you a leave management app with a table and you want a list, it can be easily changed on our studio’s canvas. Having said that, we have some guardrails.

WM: Round-trips will be cool, right?

PR: Though it will be cool to round-trip, I am skeptical about that. We need to think more. The customers we deal with do not want to subsume the designer’s role and play at the edge of user experience. They have teams that are dedicated to doing these things. Also, ideation and implementation happen in two different parts of the organisation. They do not want to short-circuit that. Not yet.

Actually, you should not be asking me about round trips. The question we should ask is: What happens if you shorten the iteration cycle and our tool lets you do eight iterations instead of four in the same given month? That’s more than acceleration. That’s the compounding of gains and value you’re building on top of what you already did. This brings immediate and real benefits to team productivity, not just unlocking an individual’s potential to round-trip.

WM: That’s what WaveMaker AI’s goal is: help multi-disciplinary teams unlock their potential.

PR: Yes, because right now, WaveMaker is really designed for individual developers. Of course, teams using WaveMaker see overall productivity gains because of component reuse, templates, drag-n-drop interface, etc. But the real unlocking of value will happen when you’re able to bring in teams that are currently working together but are outside WaveMaker.

WM: Finally, do you think this constant demand for speed is tenable?

PR: Yes, the human potential is unlimited. It is the “iteration cycle to value” that constrains the speed. If you don’t see value, you won’t be interested. This has been the thing since the Industrial Revolution when groups of people worked together, and they just got better at working together. They got faster at creating something because they could, like I said, iterate and create value quickly.

WM: In the low code world, what’s the bottleneck for speed?

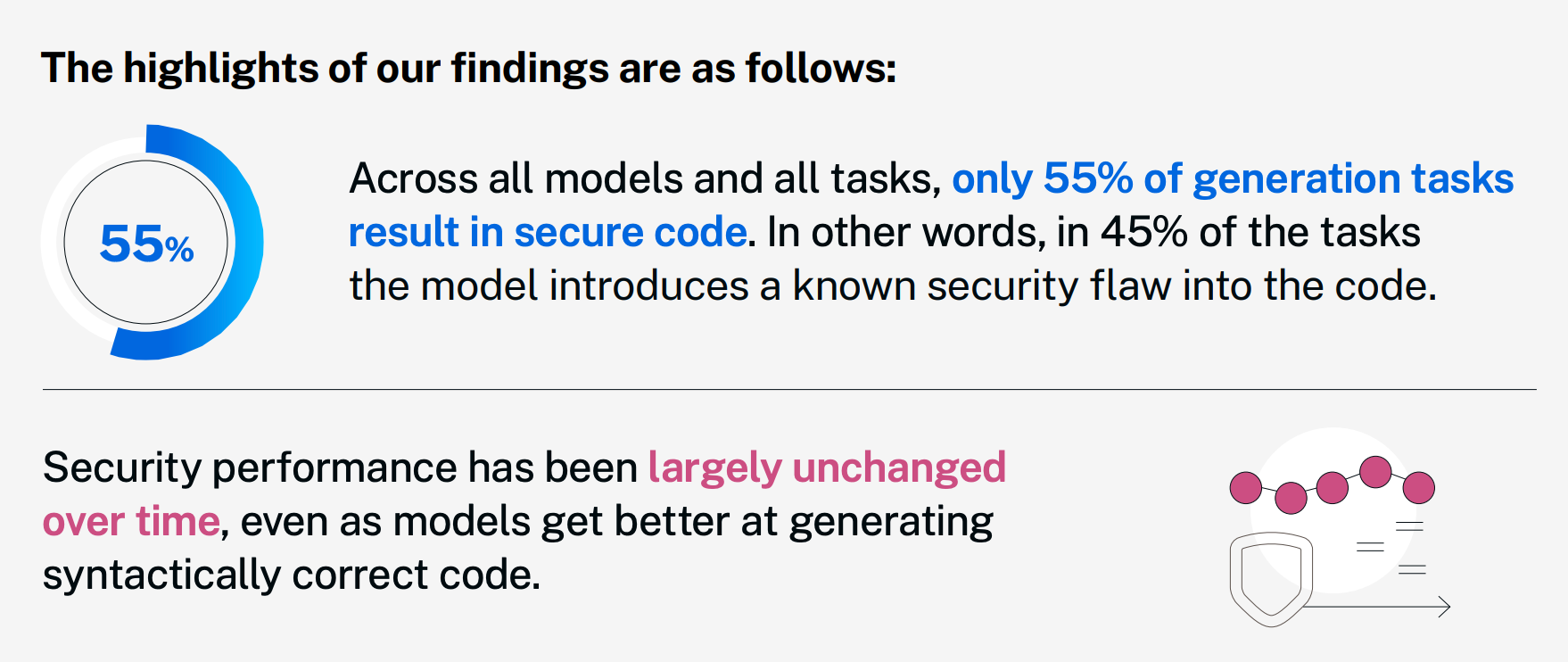

PR: There could be more than one. But the “Design to Code gap” is where we are at now. There are also compliance guidelines. Did you do the right thing by security? Did you do the right thing by accessibility? Answers to questions like that mean various guardrails have to be put in place when a project is being coded. So that in the end, you don’t pay the tax. But unlike design, these are deterministic and easy to set rules for.

WM: Assuming most want cookie-cutter UI, especially mobile, and my LLM is well-trained on a million files, will iterations go away eventually?

PR: That’s difficult to imagine, though AI gets better at second-guessing as it evolves. But people still would want to iterate because it is a way to clarify what their intent is. There is a bit of wandering in all creative processes, which is never straight. “Design to code” iterations are how teams can meander, discover and pivot their way to an end product.

Coding up something and getting it to work is an intense process that does not leave a lot of time and mind space for more ideation. There is also resistance that grows to making changes after something is implemented. Having a designer role that is protected from this, with a bit of freedom, is a good thing to have in the team.

WM: While AI becomes better and better at second-guessing what users do and what they want, what lies ahead for WaveMaker?

PR: The key is how we work the abstraction ladder we spoke about in the beginning. Yes, in the shallow end of the pool, AI will democratize access to creating cookie-cutter apps. You may also have a GPT-powered phone that does everything and anything for you. Yeah, that could be one outcome. But in the deep end of the pool of developers, teams will continue to look for ways to deliver value with faster and more productive iterations. There is always room for providing more value. That’s where we dive in.

WM: Thank you, Prasanth. That was an illuminating session of WaveLength.